Experienced security practitioners develop something like a sixth sense for context.

They notice when a session is getting cluttered (too many log dumps, too many investigative threads) before their agent starts giving confused or generic answers. They recognize the early warning signs and act proactively rather than reactively.

This is pattern recognition that develops with practice. In security work, where the data is dense and the stakes are high, developing this intuition is a core skill. But it also reveals something about design. The answer to context pressure isn't just "clear your session and start over." Two architectural decisions can mitigate the problem before it starts: preprocessing raw data so agents never see the noise, and designing multi-agent systems where no single agent has to carry the full weight of an investigation. Understanding why both matter starts with understanding context itself.

The attention reality

An agent doesn't give equal attention to everything in its context window. Even though all that content is technically "there," some of it gets more focus than the rest.

Agents pay more attention to content at the beginning of the context (system prompts, instructions, tool definitions) and content at the end (recent exchanges, the latest query). Content in the middle (older conversations, log files you loaded earlier, command outputs from three threads ago) gets progressively less attention.

This is sometimes called the "lost in the middle" phenomenon, after a 2023 paper by Liu et al., led by Stanford researchers, that demonstrated it across several large language models.

For security work, this has real consequences. That constraint you established earlier, "we're only looking at lateral movement from this specific host," is still in context, technically. But the agent might not be actively considering it when it's buried under hundreds of lines of Sysmon events and network flow data. It starts reasoning broadly when you need it reasoning narrowly.

This is why you sometimes see an agent "forget" the scope of an investigation mid-session, even when you defined it clearly at the start. The information is there. It's just not getting enough attention.
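One way to work with this attention pattern, rather than against it, is to keep the scope at both ends of the context. The sketch below is a minimal illustration, not a prescribed method: the role/content message format, the `build_messages` helper, and the host name `WS-042` are all assumptions made for the example.

```python
from typing import Dict, List

Message = Dict[str, str]


def build_messages(scope: str, history: List[Message], query: str) -> List[Message]:
    """Place the investigation scope at both high-attention positions.

    The scope goes in the system message (start of context) and is restated
    immediately before the latest query (end of context), so it does not sit
    only in the middle of a long session.
    """
    system = {"role": "system", "content": f"Investigation scope: {scope}"}
    latest = {
        "role": "user",
        "content": f"Reminder of scope: {scope}\n\n{query}",
    }
    return [system, *history, latest]


# Hypothetical session history; the placeholder stands in for a large log dump.
history = [
    {"role": "user", "content": "<several hundred lines of Sysmon events>"},
    {"role": "assistant", "content": "Summary of the Sysmon events..."},
]

messages = build_messages(
    scope="lateral movement from host WS-042 only",
    history=history,
    query="Which of these logons look suspicious?",
)
```

Restating the scope costs a few tokens per turn, which is usually cheap compared with an answer that drifts outside the investigation.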

When context is healthy

When context is healthy in a security session, you'll notice:

- The agent remembers your investigation scope without being reminded

- Detection logic suggestions are specific to your environment, not generic templates

- The agent doesn't re-ask about log sources or data schemas you already provided

- Each analytical step builds on the previous one and the reasoning chain stays coherent

When you see these signs, context is fine. Keep working.

Recognizing context degradation

Context doesn't fail all at once. It degrades on a spectrum, and the earlier you catch it, the less work you lose.

Early signs are subtle. Responses lose specificity. Where the agent used to reference your actual field names and log sources, now it's giving generic advice. It re-asks about things you already covered. Switching investigative threads (from DNS beaconing to process execution on a suspect host) introduces friction as context from one thread becomes noise for the other.

Late signs are unmistakable. The agent contradicts your investigation scope. It forgets constraints you explicitly set. It mixes details from different lines of inquiry into incoherent responses.

In the moment, when you're mid-investigation and context is slipping, the immediate response is straightforward: early signs mean compact or refocus; late signs mean stop and restart the current thread fresh. Don't push through degraded context. It only gets worse.

But this is triage, not a solution. If you're regularly hitting context walls, that's a design problem, and the rest of this article is about what to do about it.

Why security work hits context so hard

Security data has characteristics that make context management especially tricky.

Log files are context hogs. A single Sysmon export can be thousands of lines. A packet capture summary, a Zeek conn.log slice, or a Windows Event Log dump can each consume more context than an entire source code file. Load raw logs without filtering, and you'll fill the window fast with mostly noise.
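To make that cost concrete, here is a rough back-of-the-envelope sketch. It assumes about four characters per token, which is only a heuristic and not a real tokenizer, and a hypothetical 200,000-token window; the log lines are synthetic stand-ins, not real telemetry.

```python
from typing import Iterable

CHARS_PER_TOKEN = 4  # assumption: rough heuristic, not a real tokenizer


def estimate_tokens(text: str) -> int:
    """Approximate token count from character length."""
    return len(text) // CHARS_PER_TOKEN


def context_share(lines: Iterable[str], window_tokens: int) -> float:
    """Fraction of a context window the given lines would occupy."""
    used = sum(estimate_tokens(line) for line in lines)
    return used / window_tokens


# Synthetic stand-in for a raw network-connection export (not real data).
raw_log = [
    f"2024-01-01T00:{i // 60 % 60:02d}:{i % 60:02d}Z EventID=3 host=WS-042 "
    f"dst=10.0.0.{i % 255} port=443"
    for i in range(5000)
]

share = context_share(raw_log, window_tokens=200_000)
print(f"{len(raw_log)} raw lines use roughly {share:.0%} of the window")
```

For real numbers, swap in the tokenizer for the model you actually use; the point is only that a single unfiltered export can claim a large share of the window before any reasoning happens.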

Investigations sprawl by nature. When you're triaging an alert or hunting a threat, it's natural to follow leads. But each lead adds context: new logs, new hosts, new hypotheses. Before you know it, you've accumulated context from three different investigative threads, and the agent is trying to reason across all of them at once.

Detection rules have deep dependencies. When you load a Sigma rule to analyze, you might also need the lookup tables it references, the baseline it compares against, the MITRE technique documentation, and raw log samples showing what the attack actually looks like. One rule can easily pull in five supporting artifacts.

Being aware of these patterns helps you work more deliberately. But awareness alone points to something deeper about how agentic security systems should be designed.

The case for prefiltering

This is the architectural lesson that context management teaches you: raw security data should never go straight to an agent.

Think about what happens when you pipe unfiltered logs directly into an agent's context. Endpoint telemetry arrives from Sysmon, thousands of events per host per hour. Network sensors produce connection logs, DNS queries, HTTP transactions. SIEM alerts fire across the environment. If all of that lands in an agent's context window raw, the agent isn't "powerful." It's drowning.

The right design puts deterministic code in front. A preprocessing layer, something like a receptor, ingests raw telemetry, cleans it, normalizes it, and harmonizes field names across sources. It does the compute-heavy work that deterministic code does best: correlating network connections into sessions, computing statistical baselines, identifying communication patterns, deduplicating redundant events. None of this requires reasoning. It requires fast, reliable data wrangling at scale.
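A minimal sketch of two of those steps, field-name harmonization and deduplication, might look like the following. The alias table maps Sysmon-style and Zeek conn.log-style field names onto one shared schema; the shared names (`src_ip`, `dst_ip`, `dst_port`), the `preprocess` function, and the sample events are assumptions made for illustration, not a reference design.

```python
from collections import Counter
from typing import Dict, Iterable, List

# Illustrative mapping from source-specific field names to one shared schema.
FIELD_ALIASES = {
    "SourceIp": "src_ip",         # Sysmon-style
    "DestinationIp": "dst_ip",
    "DestinationPort": "dst_port",
    "id.orig_h": "src_ip",        # Zeek conn.log-style
    "id.resp_h": "dst_ip",
    "id.resp_p": "dst_port",
}


def normalize(event: Dict[str, object]) -> Dict[str, object]:
    """Rename known fields to the shared schema; leave other fields as-is."""
    return {FIELD_ALIASES.get(key, key): value for key, value in event.items()}


def preprocess(raw_events: Iterable[Dict[str, object]]) -> List[Dict[str, object]]:
    """Collapse repeated connections into one record each, with a count."""
    counts: Counter = Counter()
    for raw in raw_events:
        event = normalize(raw)
        key = (event["src_ip"], event["dst_ip"], int(event["dst_port"]))
        counts[key] += 1
    return [
        {"src_ip": src, "dst_ip": dst, "dst_port": port, "count": n}
        for (src, dst, port), n in counts.most_common()
    ]


# Hypothetical events from two sources describing overlapping connections.
raw = [
    {"SourceIp": "10.0.0.5", "DestinationIp": "203.0.113.9", "DestinationPort": "443"},
    {"SourceIp": "10.0.0.5", "DestinationIp": "203.0.113.9", "DestinationPort": "443"},
    {"id.orig_h": "10.0.0.5", "id.resp_h": "203.0.113.9", "id.resp_p": 443},
    {"id.orig_h": "10.0.0.5", "id.resp_h": "198.51.100.7", "id.resp_p": 53},
]

summary = preprocess(raw)
```

Here four raw events become two records. Nothing in this step needs a model, which is the point: the agent later sees the two records, not the four events.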

Then, when an agent actually needs data (to analyze a suspicious pattern, investigate an alert, or reason about whether observed behavior matches a known attack chain), it requests a specific slice. Filtered and relevant. The agent's context window gets exactly what it needs and nothing more.
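As a sketch of what "requesting a slice" could mean in practice, the function below filters preprocessed events by host and time window and caps the result size. The `Event` shape, the `request_slice` name, the in-memory list standing in for a real data store, and the host names are all hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import List


@dataclass(frozen=True)
class Event:
    timestamp: datetime
    host: str
    summary: str


def request_slice(
    store: List[Event],
    host: str,
    start: datetime,
    end: datetime,
    limit: int = 50,
) -> List[Event]:
    """Return at most `limit` events for one host in [start, end), oldest first."""
    matches = [e for e in store if e.host == host and start <= e.timestamp < end]
    matches.sort(key=lambda e: e.timestamp)
    return matches[:limit]


# Hypothetical preprocessed store: one event per minute, alternating hosts.
base = datetime(2024, 1, 1, 12, 0, 0)
store = [
    Event(base + timedelta(minutes=i), "WS-042" if i % 2 == 0 else "WS-107", f"event {i}")
    for i in range(120)
]

window = request_slice(
    store,
    host="WS-042",
    start=base,
    end=base + timedelta(minutes=30),
    limit=10,
)
```

The hard `limit` matters as much as the filters: it puts an upper bound on how much context any single request can consume, whatever the data looks like.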

This is the separation that matters. The deterministic layer handles volume at speed with predictable, repeatable results. The agent reasons over relevant context with the full depth it's capable of.

Skip that preprocessing step and you're burning tokens on noise, degrading the agent's reasoning quality, and building a system that costs more while doing less.

The case for multi-agent design

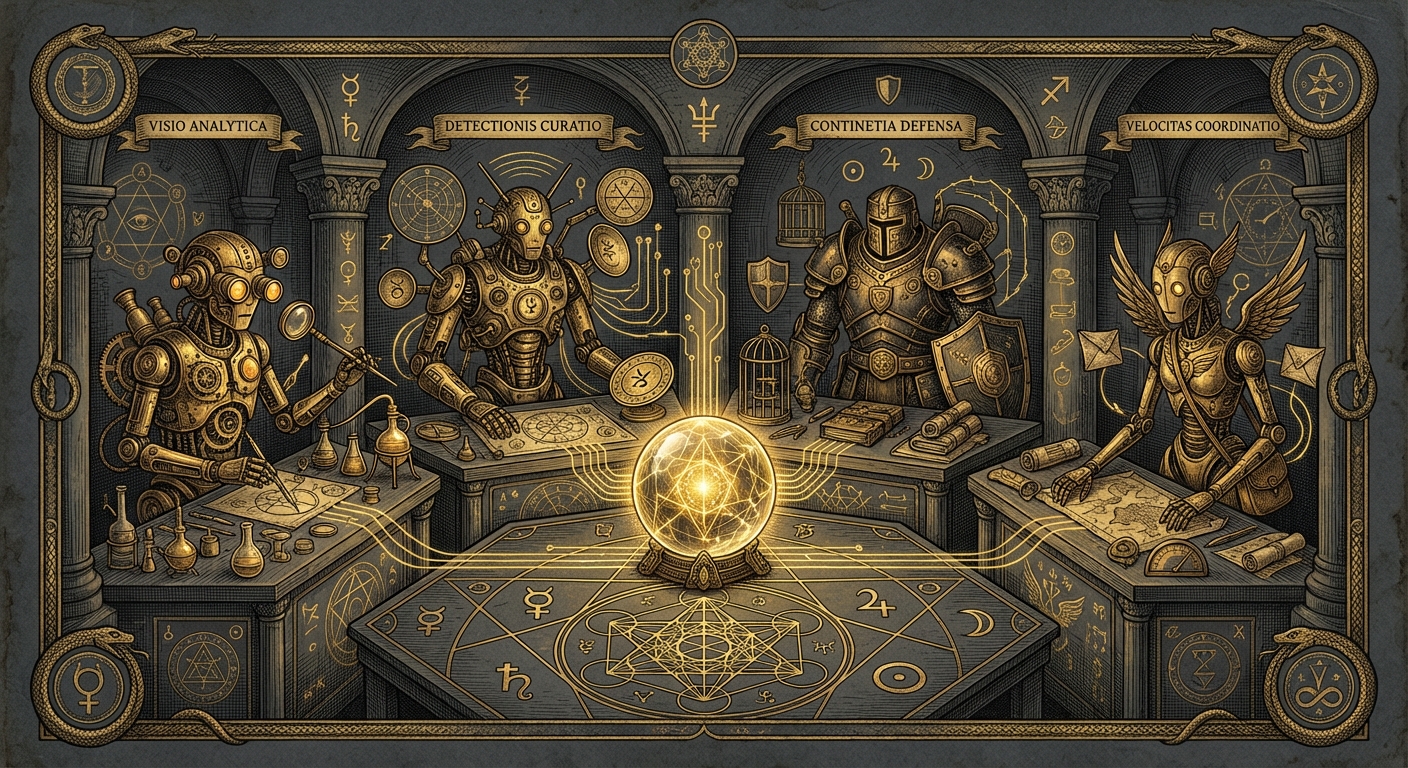

Prefiltering solves the problem of what data reaches agents. But there's a second question: how many agents should that data reach?

The instinct is to build one powerful agent that handles an entire investigation. It triages the alert, pulls the logs, analyzes behavior, checks against known attack chains, and writes the verdict. And for simple cases, that can work. But investigations aren't simple. They branch. One suspicious process spawns three lines of inquiry: network connections, parent process lineage, file system activity. If a single agent is chasing all three, its context fills with competing threads. The DNS beaconing evidence starts interfering with the process tree analysis. Scope bleeds across threads, and you get exactly the degradation we talked about earlier.

The alternative is to split the work. An orchestrator agent manages the investigation at a high level. It identifies lines of inquiry and dispatches sub-agents, each with a narrow, focused task: "analyze the network connections from this host during this time window," "trace the process tree for this PID," "check these file hashes against known malware." Each sub-agent works with a clean, minimal context. It does its job and reports back a summary.

The orchestrator never sees the raw logs. It sees summaries from agents that did. Its context stays lean: the investigation scope, the questions it asked, and the answers it got back. It can reason about the full picture without drowning in the details.
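The shape of that arrangement can be sketched in a few lines. In this illustration `run_sub_agent` is a placeholder that would wrap a model call in a real system; it, the `Task` type, the `orchestrate` function, and the sample data are assumptions, not a specific framework's API.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class Task:
    name: str
    instruction: str


def run_sub_agent(task: Task, raw_records: List[str]) -> str:
    """Placeholder for a model call that reads raw records and returns a summary.

    Only this function ever sees the raw records.
    """
    return f"{task.instruction}: reviewed {len(raw_records)} records"


def orchestrate(
    scope: str, tasks: List[Task], data_by_task: Dict[str, List[str]]
) -> Dict[str, object]:
    """Dispatch each task to its own sub-agent and keep only the summaries."""
    findings = {
        task.name: run_sub_agent(task, data_by_task.get(task.name, []))
        for task in tasks
    }
    # The orchestrator's working context: scope plus summaries, no raw logs.
    return {"scope": scope, "findings": findings}


tasks = [
    Task("network", "analyze network connections from WS-042, 12:00-12:30"),
    Task("process_tree", "trace the process tree for PID 4312"),
]
data_by_task = {
    "network": [f"raw conn line {i}" for i in range(800)],
    "process_tree": [f"raw process event {i}" for i in range(300)],
}

report = orchestrate("lateral movement from WS-042 only", tasks, data_by_task)
```

Each sub-agent starts from an empty context and gets one instruction plus one data slice, so a noisy thread cannot leak into another thread's reasoning.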

You can take this further. A final decision-making agent receives the orchestrator's compiled findings and makes the call: is this malicious, benign, or inconclusive? This agent's context is even more focused, just the distilled evidence and the question. It doesn't carry the baggage of the investigation process. It just evaluates what the investigation found.
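A sketch of the input and output of such a decision step is below. The model call itself is left out; `build_decision_prompt`, `parse_verdict`, the prompt wording, and the sample findings are hypothetical names and values chosen for the example.

```python
from enum import Enum
from typing import Dict


class Verdict(Enum):
    MALICIOUS = "malicious"
    BENIGN = "benign"
    INCONCLUSIVE = "inconclusive"


def build_decision_prompt(scope: str, findings: Dict[str, str]) -> str:
    """Assemble only the distilled evidence and the question."""
    lines = [f"Scope: {scope}", "Findings:"]
    lines += [f"- {name}: {summary}" for name, summary in findings.items()]
    lines.append("Answer with one word: malicious, benign, or inconclusive.")
    return "\n".join(lines)


def parse_verdict(reply: str) -> Verdict:
    """Map a model reply to a Verdict, treating anything unrecognized as inconclusive."""
    word = reply.strip().lower().rstrip(".")
    for verdict in Verdict:
        if verdict.value == word:
            return verdict
    return Verdict.INCONCLUSIVE


prompt = build_decision_prompt(
    scope="lateral movement from WS-042 only",
    findings={
        "network": "periodic outbound connections to one external address",
        "process_tree": "connections originate from an unsigned binary",
    },
)
verdict = parse_verdict("Malicious.")
```

Defaulting to `INCONCLUSIVE` on an unexpected reply is a deliberate choice in this sketch: an unclear answer should route to a human rather than be read as a clean bill of health.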

This is how context stays healthy at scale. No single agent carries more than it can handle. The preprocessing layer keeps the noise out. The multi-agent architecture keeps the scope tight. Together, they let each agent do its best reasoning on exactly the data it needs.

Design for context, don't fight it

Context windows will get larger. Models will get better at attending to middle content. Some of the reactive workarounds we use today (compacting, resetting, restarting threads) will matter less over time. But the design principles won't change.

Even with a million-token context window, you still don't want an agent wading through thousands of irrelevant log entries to find the ten that matter. Even with perfect attention distribution, you still don't want a single agent juggling five investigative threads when five focused agents could each handle one cleanly. Preprocessing and multi-agent architecture aren't workarounds for current limitations. They're how you build systems that use every token well, minimize inference cost, and let agents do their best reasoning regardless of how much headroom the model gives you.

Context will always be a resource worth spending carefully. The teams that treat system design as the answer, rather than fighting context session by session, are the ones building agentic security systems that actually scale.