You're setting up reference documents for your AI coding assistant—Sysmon event fields, Zeek log formats, your detection rule syntax. Then you pause.

"Doesn't Claude already know what Sysmon Event 3 fields are? Why bother with reference documents for information the model was trained on?"

Fair question. And the answer changes how you approach security work with AI agents.

The Tolerance for Error Problem

Yes, Claude often has this information from training. For general explanations, high-level concepts, and common patterns, it does remarkably well from memory.

But "often" and "remarkably well" aren't good enough when you're building detection logic where a wrong field name means a rule that silently fails to catch threats.

The LLM's power lies in reasoning, judgment, context-awareness, and synthesizing information—not in perfectly recalling exact technical specifications word-for-word.

It might remember that Sysmon Event 3 has something to do with network connections, but misremember whether the field is DestinationIp or DestIP or dest_ip.

For most conversations, that's fine. For detection rules that run in production, it's not.

The Strategic Division

Think of it this way: you'd trust a skilled analyst's judgment about whether a behavior is suspicious, but you'd still want them to double-check the exact log field names against documentation rather than going from memory.

Same principle here.

Reference documents serve as authoritative ground truth for the details that absolutely must be correct. They remove the possibility of subtle hallucinations in places where subtle errors cause real problems.

Claude's reasoning decides what to do with the information. The reference document ensures the specific technical details are accurate.

What This Looks Like in Practice

For security work, your reference documents might include:

Telemetry references:

- Sysmon event IDs and their key fields

- Zeek log types and column names

- Your SIEM's field naming conventions

Detection patterns:

- Your organization's Sigma rule syntax

- Custom detection framework schemas

- Threshold values for specific alert types

Environment specifics:

- Network topology and IP ranges

- Known-good processes and behaviors

- Baseline patterns that shouldn't trigger alerts

When Claude needs to write a detection rule, it reads the relevant reference first. Now it's reasoning about what behavior to detect (its strength) while having exact field names and syntax in front of it (your reference document's job).

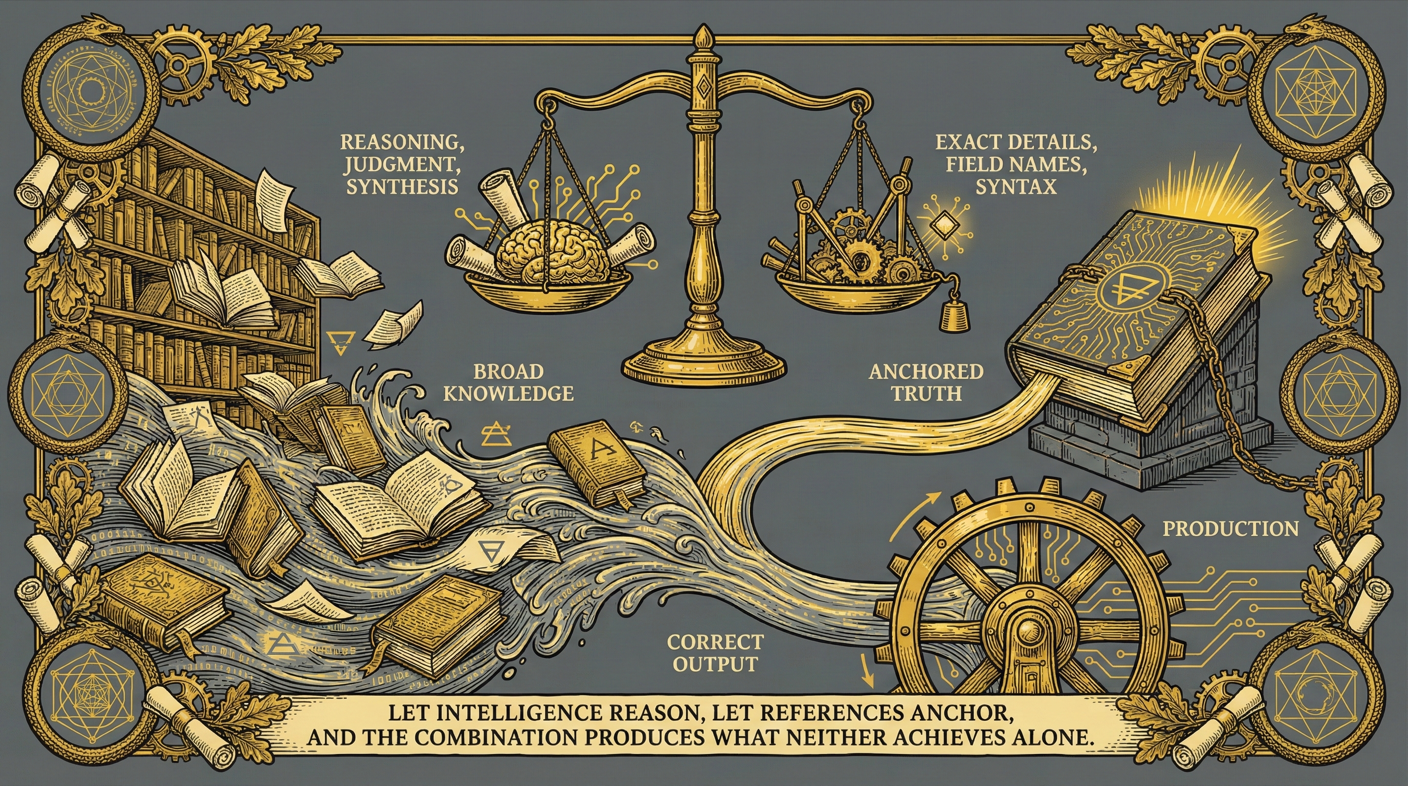

The General Strategy

This is a pattern worth internalizing beyond just security work:

Use reference documents to anchor technical specifics, and let Claude's intelligence handle the reasoning and judgment on top of that anchored truth.

The more critical the accuracy of specific details—field names, API endpoints, configuration syntax—the more valuable it is to provide authoritative references rather than relying on training data recall.

Claude's training gives it broad knowledge. Your references give it precision where precision matters.

Key Takeaways

Training data enables reasoning — The LLM understands concepts and can reason about them, but may not recall exact specifications perfectly.

Reference documents ensure precision — For details that must be exactly right (field names, syntax, schemas), provide authoritative references.

Combine both strategically — Let the AI reason about what to do while grounding it in accurate technical specifics.

Match tolerance to stakes — General explanations can rely on training; production detection rules need reference documents.