You've heard the fear: AI will replace security practitioners. You've heard the hype: AI will do everything for us.

Neither is accurate. The reality is more nuanced and more useful: AI coding assistants change what you do, not whether you're needed.

The most effective approach isn't "AI does the work" or "I do the work". It's a deliberate partnership where each party contributes its strengths. Understanding this division of labor is what separates productive collaboration from frustrating fights with the tool.

The New Division of Labor

Think of the partnership like this:

- Pilot and autopilot — Human sets direction, AI handles execution

- Architect and construction crew — Human designs, AI builds

- Editor and writer — Human refines, AI produces

You're still essential. Your role has shifted.

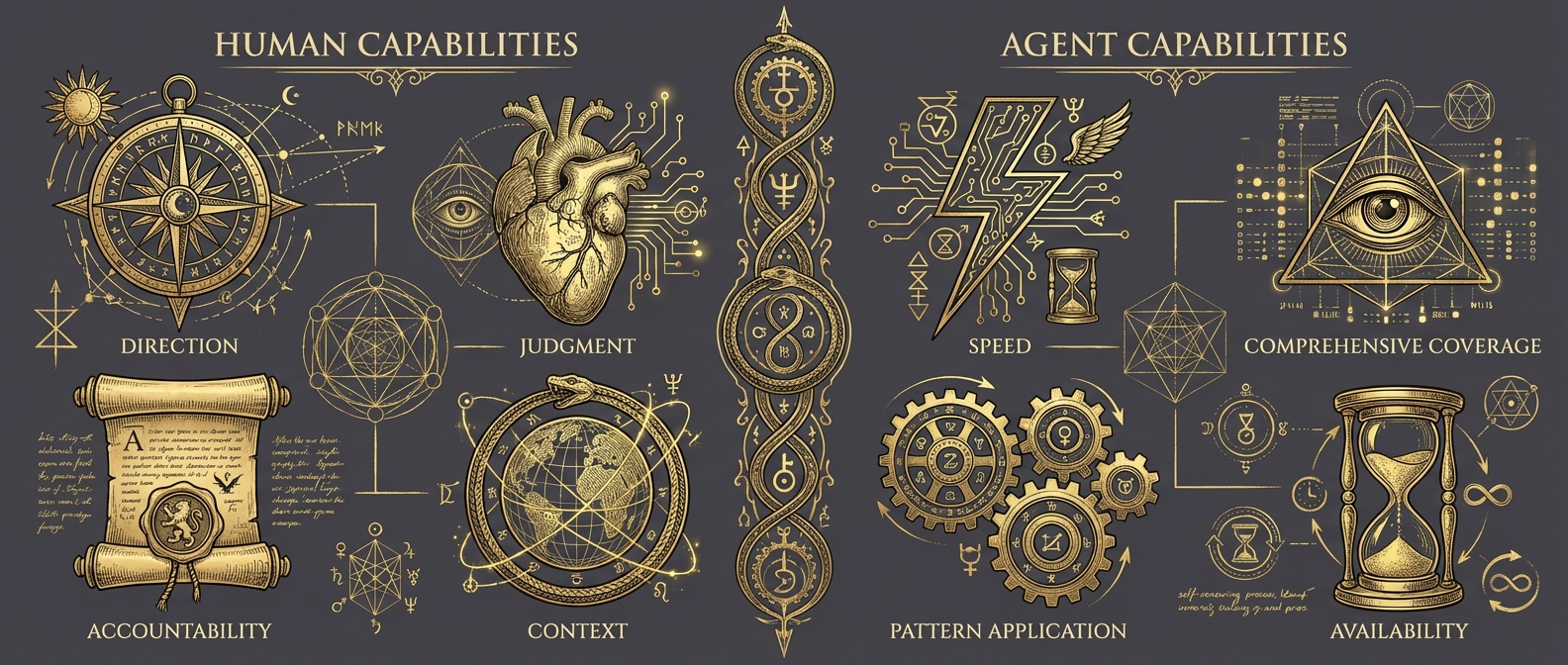

What You Bring

Intent and Direction

The agent doesn't know what to build. You do.

- What problem are we solving?

- What does success look like?

- What constraints matter?

- What's the priority?

Without your direction, the agent has no purpose. It can execute brilliantly but can't decide what to execute.

Security context: The agent doesn't know which threats matter to your organization, what your risk tolerance is, or which assets are crown jewels. You provide that strategic context.

Judgment and Taste

The agent can produce options. You decide which is right.

- Is this the right approach?

- Does this fit our architecture?

- Will the team understand this?

- Is this over-engineered?

Judgment comes from experience, context, and understanding of the broader picture—things the agent simply doesn't have access to.

Security context: Is this detection rule too noisy for your environment? Will this response action cause acceptable business impact? These are judgment calls that require understanding your specific context.

Accountability and Care

The agent doesn't feel ownership. You do.

- Will this work in production?

- Is this secure enough?

- What did we miss?

- Can we defend this decision?

You sign your name to the outcome. That accountability drives a level of care that agents can't replicate.

Security context: When an incident occurs, you're accountable for the response. The agent assisted, but the decision trail leads to you.

Context and Constraints

The agent sees the codebase. You see the world around it.

- The stakeholder who hates complexity

- The deadline that can't move

- The junior analyst who'll maintain this

- The legacy SIEM we can't replace yet

These constraints aren't in any file. They live in your head.

What the Agent Brings

Speed and Tirelessness

Agents don't get tired, distracted, or bored.

- Generate ten variations instantly

- Search entire codebase in seconds

- Apply changes to a hundred files consistently

- Iterate until tests pass

Tasks that would exhaust you are trivial for agents.

Security context: Searching a million-line log file for anomalies. Generating test cases for every input permutation. Checking every dependency for known vulnerabilities.

Comprehensive Coverage

Given an explicit checklist, agents work through every item without losing focus.

- Check every file for the pattern

- Handle every edge case in tests

- Update every reference when refactoring

- Document every function

Thoroughness without fatigue.

Security context: Reviewing every code path for input validation. Checking every API endpoint for authentication. Ensuring every detection rule has corresponding test cases.
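The last of these checks, making sure every detection rule has a test, is mechanical enough to sketch in a few lines. The version below is illustrative only: the `rules/` and `tests/` layout, the `test_` file-name prefix, and the function name `rules_missing_tests` are assumptions, not conventions this lesson prescribes.

```python
from pathlib import PurePath

TEST_PREFIX = "test_"  # assumed naming convention: rules/foo.yml -> tests/test_foo.yml


def _covered_rule_name(test_path):
    """Return the rule name a test file covers, or None if it isn't a rule test."""
    stem = PurePath(test_path).stem
    if stem.startswith(TEST_PREFIX):
        return stem[len(TEST_PREFIX):]
    return None


def rules_missing_tests(rule_files, test_files):
    """Return the sorted names of rules that have no matching test file."""
    covered = {
        name for name in map(_covered_rule_name, test_files) if name is not None
    }
    return sorted(
        PurePath(rule).stem
        for rule in rule_files
        if PurePath(rule).stem not in covered
    )


if __name__ == "__main__":
    # Hypothetical file listings; in practice these would come from the repository.
    rules = ["rules/lsass_access.yml", "rules/psexec_lateral.yml"]
    tests = ["tests/test_lsass_access.yml"]
    for name in rules_missing_tests(rules, tests):
        print(f"no test found for rule: {name}")
```

A check like this only proves that a test file exists, not that the test is meaningful. Deciding whether the samples in each test reflect real attacker behavior remains your call.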

Pattern Recognition and Application

Agents excel at recognizing and applying patterns.

- "This looks like the pattern in X"

- Apply consistent structure across components

- Match existing code style

- Follow established conventions

They've seen millions of examples. Pattern matching is what they do best.

Availability and Patience

Agents are always ready, never frustrated.

- 3 AM debugging session? Ready.

- Explain this for the fifth time? Sure.

- Try a completely different approach? No problem.

- Generate another option? Instantly.

No ego, no fatigue, no frustration.

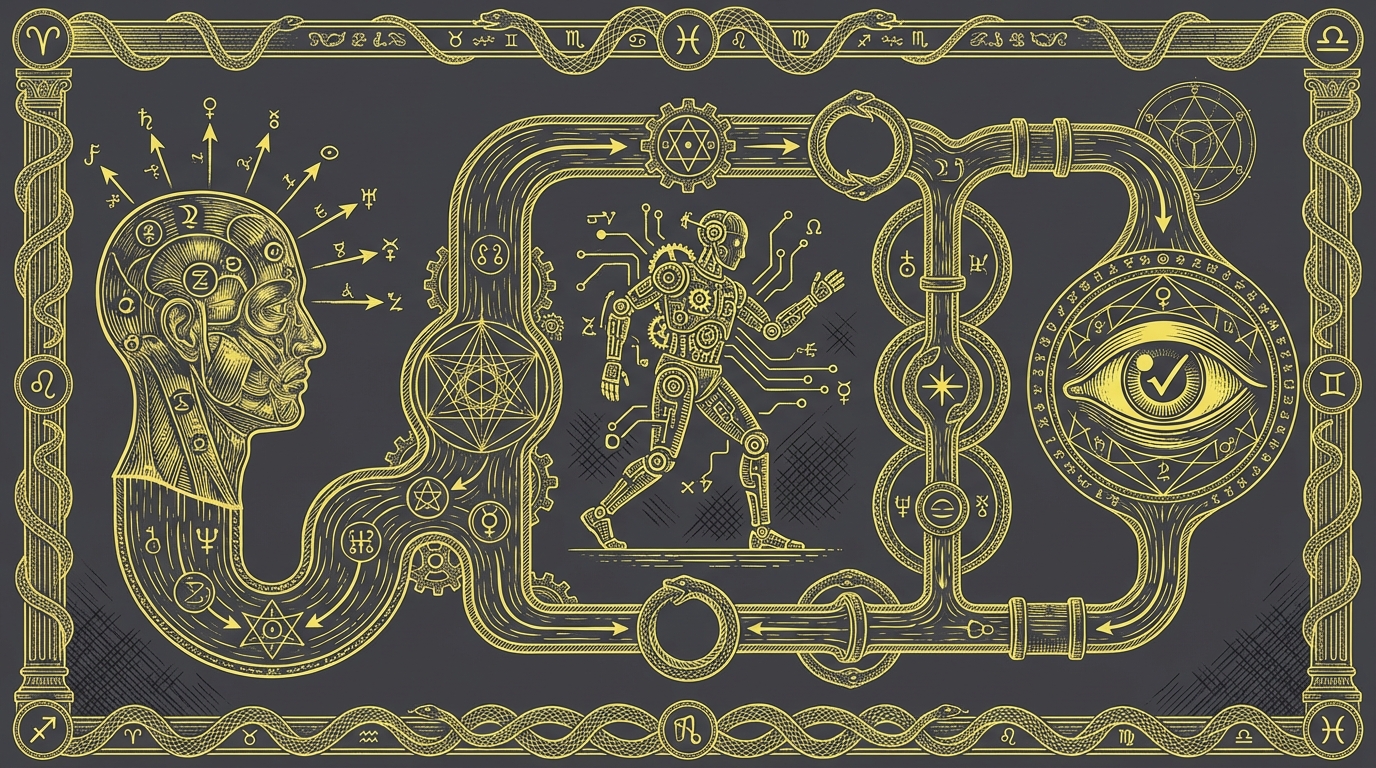

Partnership in Practice

Here's how the partnership flows for a typical feature:

Phase 1: Direction (Human-led)

You define what needs to happen: "We need rate limiting on API endpoints. 100 requests per minute per API key. Return 429 when exceeded. Use Redis for distributed counting."

Phase 2: Exploration (Agent-led)

Agent searches codebase for existing patterns. Proposes implementation approach. Identifies files that need changes. You review and adjust the approach.

Phase 3: Implementation (Agent-led)

Agent writes the rate limiter. Integrates with middleware. Adds tests. Updates documentation.
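To make Phase 3 concrete, here is a minimal sketch of what the agent's first draft might look like for the Phase 1 request. It assumes a fixed-window counter, which is only one of several possible strategies. The class names are hypothetical, and `InMemoryCounterStore` is a stand-in so the example runs without a server; it mimics only the `incr` and `expire` calls that a Redis client such as redis-py provides.

```python
import time


class InMemoryCounterStore:
    """Stand-in for Redis that supports only the incr/expire calls used below."""

    def __init__(self, clock=time.time):
        self._clock = clock
        self._values = {}   # key -> current count
        self._expires = {}  # key -> absolute expiry timestamp

    def incr(self, key):
        self._drop_if_expired(key)
        self._values[key] = self._values.get(key, 0) + 1
        return self._values[key]

    def expire(self, key, seconds):
        if key in self._values:
            self._expires[key] = self._clock() + seconds

    def _drop_if_expired(self, key):
        expiry = self._expires.get(key)
        if expiry is not None and self._clock() >= expiry:
            self._values.pop(key, None)
            self._expires.pop(key, None)


class FixedWindowRateLimiter:
    """Allow `limit` requests per `window_seconds` for each API key."""

    def __init__(self, store, limit=100, window_seconds=60, clock=time.time):
        self.store = store
        self.limit = limit
        self.window_seconds = window_seconds
        self._clock = clock

    def check(self, api_key):
        """Return the HTTP status the middleware should send: 200 or 429."""
        window = int(self._clock() // self.window_seconds)
        key = f"ratelimit:{api_key}:{window}"
        count = self.store.incr(key)
        if count == 1:
            # First request in this window: let the counter expire on its own.
            self.store.expire(key, self.window_seconds)
        return 200 if count <= self.limit else 429


if __name__ == "__main__":
    limiter = FixedWindowRateLimiter(InMemoryCounterStore(), limit=3)
    print([limiter.check("demo-key") for _ in range(4)])
```

This draft also gives Phase 4 something specific to review. A fixed window lets a client send a burst at the end of one window and another at the start of the next, so short-term traffic can approach twice the limit. Whether that is acceptable is a judgment call about your API, not something the agent can decide.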

Phase 4: Verification (Human-led)

You review for edge cases. Check security implications. Verify production readiness. Approve or request changes.

Notice the flow: Human → Agent → Human. Direction, execution, verification.

Security Example: Building a Detection Rule

Phase 1: Direction

You: "We need to detect potential credential dumping via LSASS. Focus on Sysmon Event ID 10 where the target is lsass.exe. Filter out known legitimate callers like AV software."

Phase 2: Exploration

Agent: Searches for existing detection patterns in your repository. Reviews your Sysmon configuration. Identifies the baseline of legitimate LSASS access in your test data.

Phase 3: Implementation

Agent: Writes the detection rule. Creates test cases with both malicious and benign samples. Documents the detection logic and expected false positive sources.
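As a rough illustration of the logic such a rule encodes, the sketch below expresses it as a Python predicate rather than in any particular SIEM's rule language. It assumes events arrive as flat dictionaries using the Sysmon field names `EventID`, `SourceImage`, and `TargetImage`. The allowlist entry and the function name are hypothetical placeholders.

```python
# Hypothetical allowlist: replace with callers you have actually baselined
# in your environment. Full paths are stored lowercase for comparison.
KNOWN_LEGITIMATE_CALLERS = frozenset({
    r"c:\program files\exampleav\avservice.exe",
})


def is_suspicious_lsass_access(event, allowlist=KNOWN_LEGITIMATE_CALLERS):
    """Flag Sysmon Event ID 10 (ProcessAccess) events that target lsass.exe.

    Returns True when the accessing process is not on the allowlist.
    """
    # Accept the event ID as either an int or a string, since parsers differ.
    if str(event.get("EventID")) != "10":
        return False

    target = event.get("TargetImage", "").lower()
    if not target.endswith("\\lsass.exe"):
        return False

    source = event.get("SourceImage", "").lower()
    return source not in allowlist


if __name__ == "__main__":
    sample = {
        "EventID": 10,
        "SourceImage": r"C:\Users\Public\tool.exe",
        "TargetImage": r"C:\Windows\System32\lsass.exe",
    }
    print(is_suspicious_lsass_access(sample))
```

The sketch matches allowlisted callers on full path rather than file name alone, on the assumption that a name-only exclusion would let a renamed tool slip through. A production rule would likely also consider fields such as `GrantedAccess`, which is left out here to keep the example short.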

Phase 4: Verification

You: Review the detection logic—does it catch the right behavior? Check the exclusions—are we filtering appropriately or creating blind spots? Validate against your environment—what's the expected alert volume?

The agent did the mechanical work. You provided direction and judgment.

Common Anti-Patterns

Full Delegation

"Build me a complete authentication system"

[Walk away]

Why it fails: No architectural guidance. No security review. No constraint awareness. May not fit your needs at all.

No Delegation

"Add a log statement to line 47"

"Now change line 48 to..."

"Now run the test..."

Why it fails: Not leveraging agent capability. Slower than doing it yourself. No benefit from AI.

Blind Trust

[Agent produces code]

"LGTM, ship it"

Why it fails: Missing your judgment. Security risks. Quality degradation. You're still accountable for the outcome.

The Right Mindset

Think of the agent as a talented but new team member:

- Capable of excellent work

- Needs clear direction

- Benefits from feedback

- Requires review on critical code

- Gets better as you work together

You wouldn't give a new hire full access with no oversight. You also wouldn't micromanage their every keystroke. The partnership is in between.

Key Takeaways

- Partnership, not replacement: your role changed, not your importance.

- Human strengths are direction, judgment, context, and accountability: things agents can't do.

- Agent strengths are speed, coverage, patterns, and availability: things humans struggle to sustain.

- The flow is Direction → Execution → Verification: human, agent, human.

- Avoid extremes: neither full delegation nor micromanagement works.

The future isn't AI replacing humans or humans ignoring AI. It's humans and AI in deliberate partnership, each contributing what they do best.