Your agent generates a detection rule. It scores DNS queries, produces candidate tunneling domains, and maps query patterns to subdomain entropy. The code runs. The output looks reasonable.

Do you understand why it chose those entropy thresholds? Do you know what happens when a DNS log is missing the query type field? Can you explain what method it uses to distinguish word-list DGAs from legitimate long subdomains?

If not, you're not doing agentic threat hunting. You're vibe hunting, accepting output you don't understand because it looks right and the agent sounds confident.

If you don't understand what the agent is doing and why, you can't actually collaborate with it. You can't spot what it's missing. You can't catch the assumptions that don't hold in your environment. You can't contribute the kind of domain knowledge that makes the work better than what either of you would produce alone.

Agentic threat hunting means being a real partner in the process, not a rubber stamp. That's how you build a system that actually improves over time, where human insight and agent capability compound instead of one just carrying the other.

Where the Term Comes From

Simon Willison drew a sharp line in the AI-assisted programming debate: vibe coding means accepting code you don't understand. AI-assisted programming means using AI while maintaining understanding.

These aren't the same activity with different names. They have different risk profiles. The developer who reviews every line and can explain every function is doing something categorically different from the developer who shouts YOLO without reading the diff.

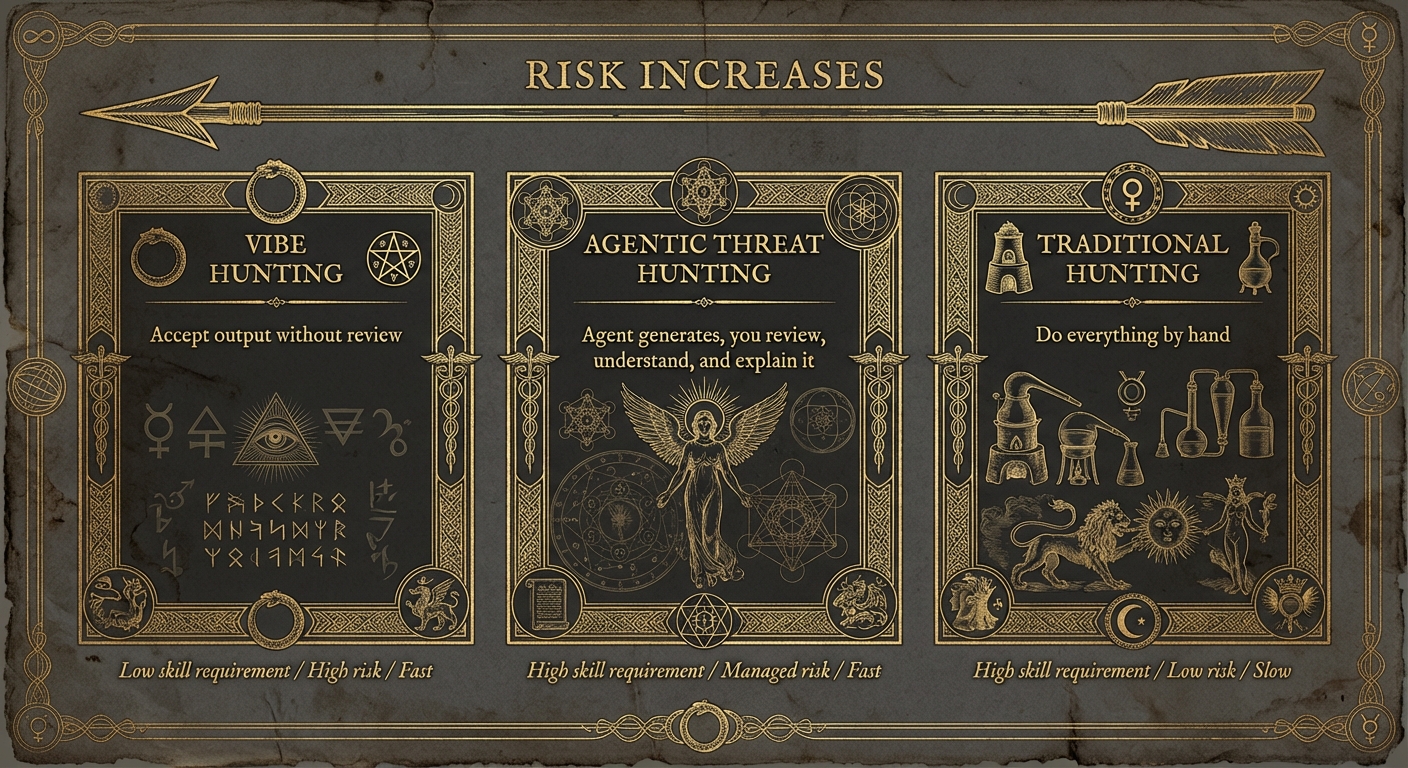

The same distinction applies to threat hunting. The defender who uses an agent to generate detection logic, generate hypotheses, enrich findings, correlate across telemetry sources, and draft assessments — but reviews the reasoning, validates the assumptions, and understands the methodology — is doing agentic threat hunting. The defender who asks the agent to "investigate this host" and forwards whatever narrative it produces is vibe hunting.

Why Vibe Hunting Is Tempting

Because it feels fast. You're shipping detections, pushing enrichments through the pipeline, generating assessments, clearing your backlog at a pace that would've been impossible six months ago. There's a genuine dopamine hit to that velocity.

But if you stop and reflect, you'll also realize the work is shallow. If someone challenges a finding, if a stakeholder asks why your assessment concluded lateral movement when the evidence could also indicate legitimate admin activity, you have no foundation to stand on. You didn't make that judgment call. The agent did, and you waved it through.

Beyond the pace illusion, the agent's output looks professional. Well-structured arguments, scoring conventions you'd expect, comments explaining the logic, even some edge case handling. At quick glance, on the surface, it looks legit.

A user agent anomaly detection that scores HTTP traffic by string entropy, frequency across the environment, and deviation from each host's baseline looks exactly like something a senior threat hunter might have written. The field names are plausible. The thresholds are in reasonable ranges. The math checks out.

But the entropy calculation treats every character equally. Real user agents have structured segments where version numbers like Chrome/124.0.6367.91 change with every update. The agent doesn't know the difference between that normal version bump and actual random garbage like kj3hf8 that a C2 implant generates.

The frequency threshold of "flag if fewer than 5 hosts use this UA" was invented, not pulled from your environment where maybe 200 hosts run a niche internal tool with a unique agent string. And the 24-hour baseline window means Chrome auto-updates Tuesday night and Wednesday morning you're triaging a hundred false positives because the new version string wasn't in yesterday's baseline.

Every one of those flaws is something a human who understands user agent structure would catch immediately. The agent doesn't know the domain well enough, but the output looks like it does.

The Golden Rule

Willison's principle translates directly: never act on agent output you can't explain to another analyst.

Not "reviewed." Explain. Reviewing is passive. Your eyes scan the output and your brain says "looks reasonable." Explaining forces you to construct a mental model of what each piece does and why.

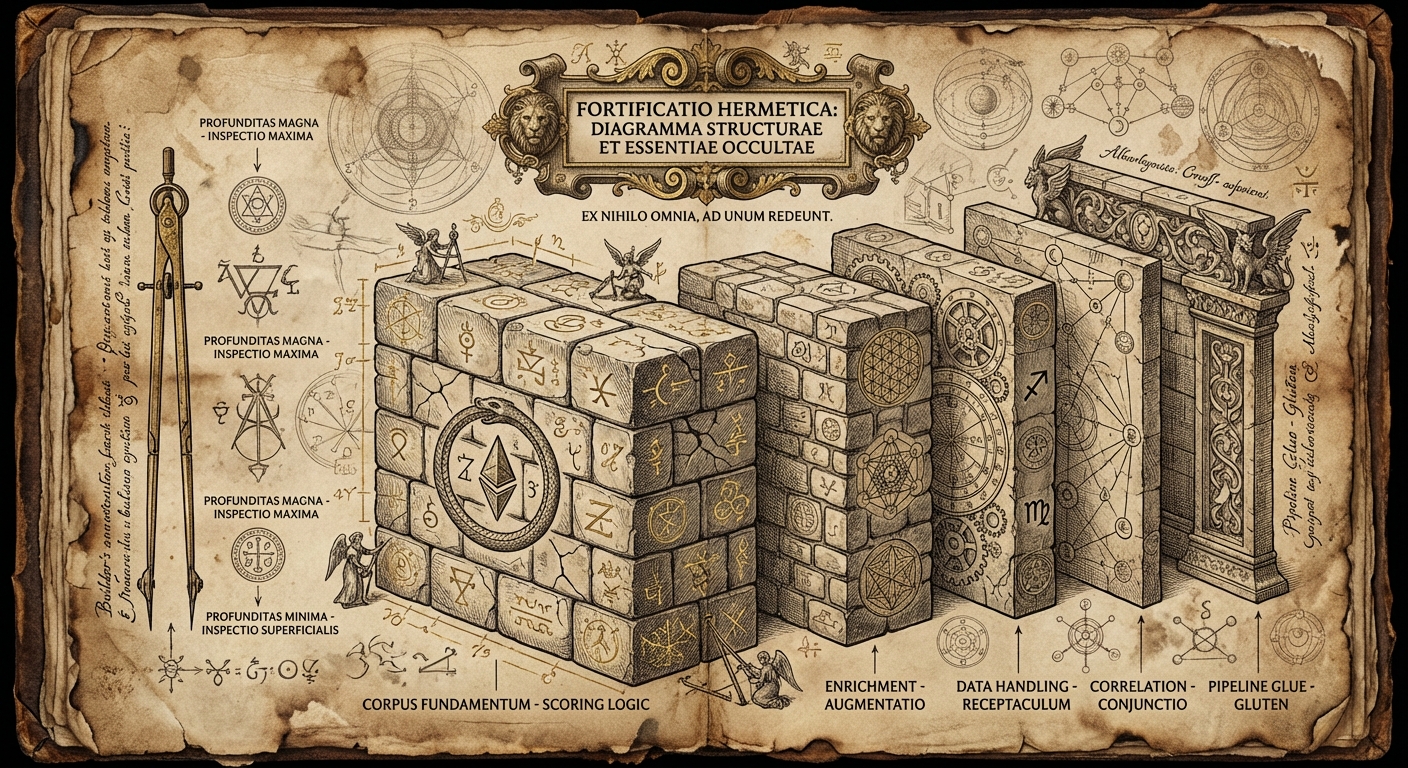

Whether it's a detection rule, an enrichment pipeline, a correlation across data sources, or a written assessment, you should be able to answer four questions.

What is this actually measuring or concluding? Not "it finds suspicious processes." For a detection: what specific attributes does it evaluate, and why do those indicate malicious behavior? For an enrichment: why did it pull from these sources and not others? For an assessment: what evidence supports this conclusion, and what alternative explanations were considered?

Why these thresholds or weights? Where did that entropy cutoff come from? Why did the correlation weight network evidence more heavily than endpoint telemetry? What changes if you adjust these values?

What are the blind spots? What would this miss? A detection calibrated for one adversary profile may be invisible to another. An enrichment that only queries one threat intel source has a single point of failure. An assessment that doesn't consider legitimate explanations isn't an assessment, it's a guess.

What happens at the edges? When a field is missing. When a host has no baseline. When enrichment times out. When the data volume is 10x normal. When the agent has to correlate across incomplete datasets.

If you can answer all four, ship it. If you can't, you don't understand the output well enough to own it.

When Vibe Hunting Is Fine

Willison doesn't condemn vibe coding entirely. There are contexts where accepting AI output without deep review is appropriate. The common thread: limited blast radius.

You're investigating a hypothesis and you ask the agent to score connections to a suspicious IP range so you can eyeball the results. The output is disposable. You're looking for patterns, not building production workflows. Vibe hunt freely.

You need to pull a specific dataset from your telemetry or enrich a set of findings with threat intel context. The agent writes the query or the enrichment logic, you run it, you look at the results. If it's wrong, you notice because you're actually reading them. No persistence, no automation, no risk.

You're exploring a new technique. You ask the agent to implement a JA3 fingerprint classifier or draft a correlation between DNS entropy and process execution trees so you can see how the approach works. You're studying the methodology, not deploying it. The purpose is understanding, and the sandbox is your attention.

Same goes for notebook analyses that don't feed into production, test environments with synthetic data, anywhere the output can't touch real operations.

The rule: if the output is disposable and the blast radius is zero, vibe hunt away. If the output persists, automates, or affects real operations, apply the golden rule.

The Five Risk Factors

Willison identifies five categories where vibe coding becomes dangerous: secrets, privacy, money, operations, and stakeholders. He's talking about software, but each one maps directly to DE&TH. Here's what they look like in our world.

Your enrichment module queries threat intel APIs with API keys. Vibe-hunted code might log the full request including the Authorization header, or hardcode credentials instead of reading from environment variables. One push to a shared repo and your API keys are in git history forever.

Your hunting pipeline processes network telemetry with IP addresses, hostnames, user identifiers, connection patterns. Vibe-hunted integrations could forward this data to unintended destinations. An enrichment module that sends your internal IPs to a third-party API you didn't vet is a data leak, even if nobody intended it.

Your agents call LLM APIs. A vibe-hunted retry loop without backoff or limits can burn through your API budget in hours. An enrichment module that queries a paid threat intel API for every connection instead of caching results can run up thousands in charges before anyone notices.

A vibe-hunted scoring rule deployed to production floods the SOC with false positives. A vibe-hunted correlation that joins network and endpoint data on the wrong key produces findings that look coherent but connect unrelated events. Nobody notices because nobody understands the logic well enough to know the output is wrong.

And when your agent's output feeds into incident response — detections, enrichments, triage decisions, written assessments — bugs have consequences beyond your personal inconvenience. A miscalibrated score means the wrong things get investigated. A flawed assessment narrative means IR teams chase ghosts while the real adversary moves laterally.

The Spectrum of Practice

This isn't binary. There's a spectrum between "accept everything" and "write every line by hand":

Most threat hunters using AI should be somewhere in the middle most of the time. The agent generates scoring logic, enrichment modules, correlation prompts, and assessment drafts. You review them. You understand them. You can explain them. You own them.

Writing every rule by hand is increasingly unnecessary for routine work. Vibe hunting is fine for exploration and learning, dangerous for anything production.

The danger zone is thinking you're in the middle when you're actually on the left. You "mostly understand" the suggestions. You "thoughtfully skimmed" the interpretations. You "trust the agent" because the last three outputs were fine. That's vibe hunting in denial.

The Collective Stakes

When vibe-hunted output fails in production — scoring rules that flood the SOC with noise, enrichments that miss context, correlations that connect unrelated events, assessments that fall apart under scrutiny — the entire practice of agentic threat hunting gets discredited.

Every failure story makes it harder for defenders who use AI responsibly to advocate for these tools. The skeptic who says "AI-assisted hunting doesn't work" doesn't distinguish between vibe hunting and careful agentic engineering. The backlash hits everyone.

Applying the golden rule, never deploying output you can't explain, protects your own operations. It also protects the legitimacy of the tools and practices that make threat hunters more capable.

The agent produced the output in 30 seconds. The review might take 10 times as long, but it makes the difference between work you own and work that owns you.